Reinforcement learning (RL) is a machine learning (ML) technique that mimics the trial-and-error process humans use to achieve their goals. Software actions that work toward the goal are rewarded, while actions that work against it are discarded.

RL algorithms use a reward-and-punishment paradigm as they process data. They learn from the feedback of each action and discover for themselves the best ways to reach the desired outcome. They are also capable of delayed gratification.

The best overall strategy may require short-term sacrifices, so the best approach they discover may include some penalties or backtracking along the way. RL is a powerful method for helping artificial intelligence (AI) systems achieve optimal outcomes even in uncertain environments.

What are the benefits of reinforcement learning?

There are many benefits to using reinforcement learning (RL). Three of them stand out.

Excels in complex environments

RL algorithms can be used in complex environments with many rules and dependencies. In the same environment, a human may not be able to determine the best path to take, even with thorough knowledge of the surroundings. Model-free RL algorithms, by contrast, adapt quickly to changing environments and find new strategies to optimize results.

Requires less human interaction

With conventional ML algorithms, humans must label data pairs to direct the algorithm. With an RL algorithm, labeling data pairs isn't needed. The algorithm learns by itself. It also offers mechanisms to incorporate human feedback, which allows systems to adapt to individual preferences and corrections.

Optimizes for long-term goals

RL inherently focuses on maximizing long-term reward. This makes it suitable for scenarios where actions have long-term consequences. It is especially suited to real-world situations where feedback isn't immediately available for every step, because it can learn from delayed rewards.

For example, decisions about energy consumption, storage, or energy sources can have long-term consequences. RL can be used to optimize energy efficiency and reduce costs over the long run. With appropriate design, RL agents can also generalize their learned strategies to similar but not identical tasks.
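
One common way to make "learning from delayed rewards" concrete is to score a sequence of rewards with a discount factor, so that a reward arriving several steps later still contributes to the value of earlier actions. The sketch below is only an illustration: the function name `discounted_return` and the discount factor `gamma=0.9` are assumptions chosen for the example, not something the text above prescribes.

```python
def discounted_return(rewards, gamma=0.9):
    """Sum a reward sequence, weighting a reward k steps ahead by gamma**k.

    `gamma` is a hypothetical discount factor picked for illustration.
    """
    total = 0.0
    # Work backwards so each step adds its reward plus the discounted future.
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total


# No feedback for three steps, then a single delayed reward of 10.
delayed = discounted_return([0, 0, 0, 10])
print("value of the first step:", delayed)
```

With `gamma` set to 1.0 the function reduces to a plain sum of rewards; smaller values make the agent favor rewards that arrive sooner.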

What are the use cases of reinforcement learning?

Reinforcement learning (RL) can be applied to a wide range of use cases. The following sections give some examples.

Marketing personalization

In applications like recommendation systems, RL can tailor suggestions to individual users based on their interactions with the system. The result is a more personalized experience. For instance, an application could display ads to a user based on some demographic information. With each ad interaction, the application learns which ads to display to that user, which can increase product sales.

Optimization challenges

Traditional optimization methods solve problems by evaluating and comparing possible solutions against a set of criteria. In contrast, RL learns from interactions to find the best, or close to the best, solution over time.

For example, a cloud spend optimization tool can use RL to adjust to changing resource needs and choose the best instance types, quantities, and configurations. The tool makes decisions based on factors such as the available and current cloud infrastructure, spending, and utilization.

Financial predictions

Financial markets are complex, with statistical properties that change over time. RL algorithms can optimize long-term returns by taking transaction costs into account and adapting to market shifts.

For instance, an algorithm could observe the rules and patterns of the market before it tests actions and records the resulting profit. It builds up a value function and then develops a strategy to maximize profits.

How does reinforcement learning work?

The learning process in reinforcement learning (RL) algorithms is similar to human and animal reinforcement learning as studied in behavioral psychology. For instance, a child may discover that they receive praise from their parents when they help a sibling or tidy up, but get a negative reaction when they throw objects or shout. Over time, the child learns which combination of actions leads to a reward at the end of the day.

An RL algorithm mimics a similar learning process. It tries different actions to learn their positive and negative values in order to achieve the end reward.

Key concepts

In reinforcement learning, there are a few key concepts to become familiar with:

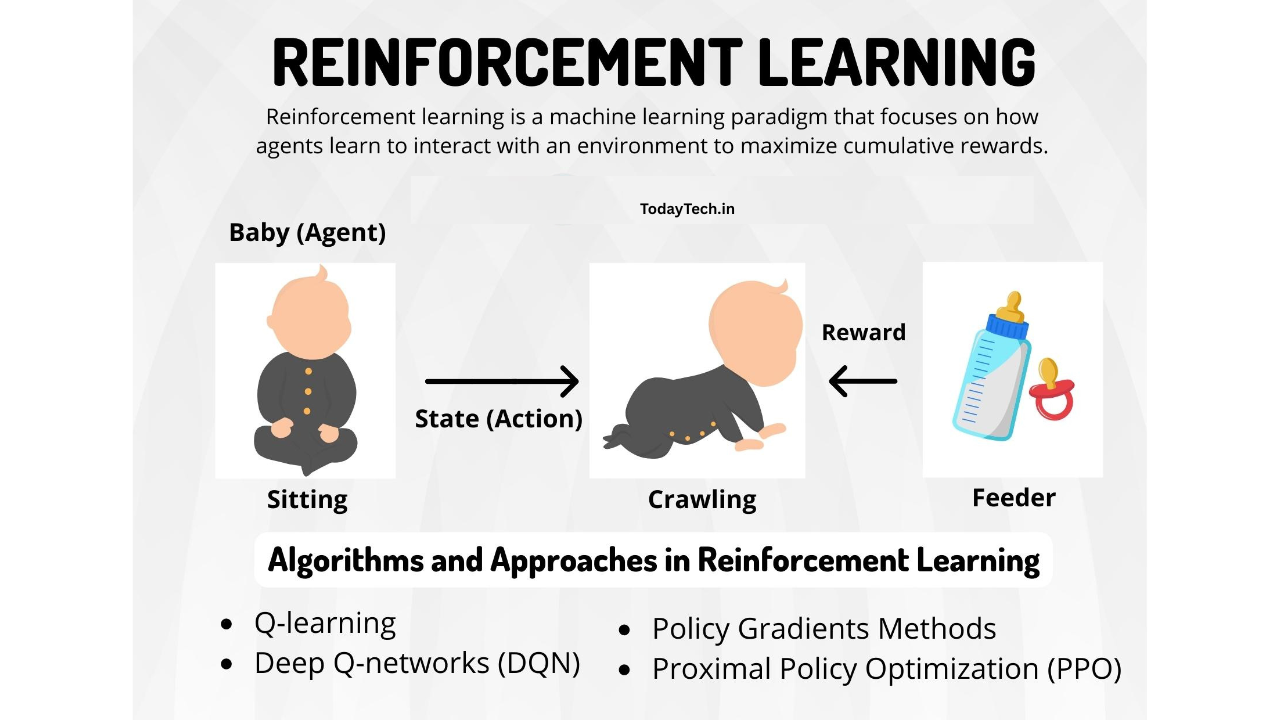

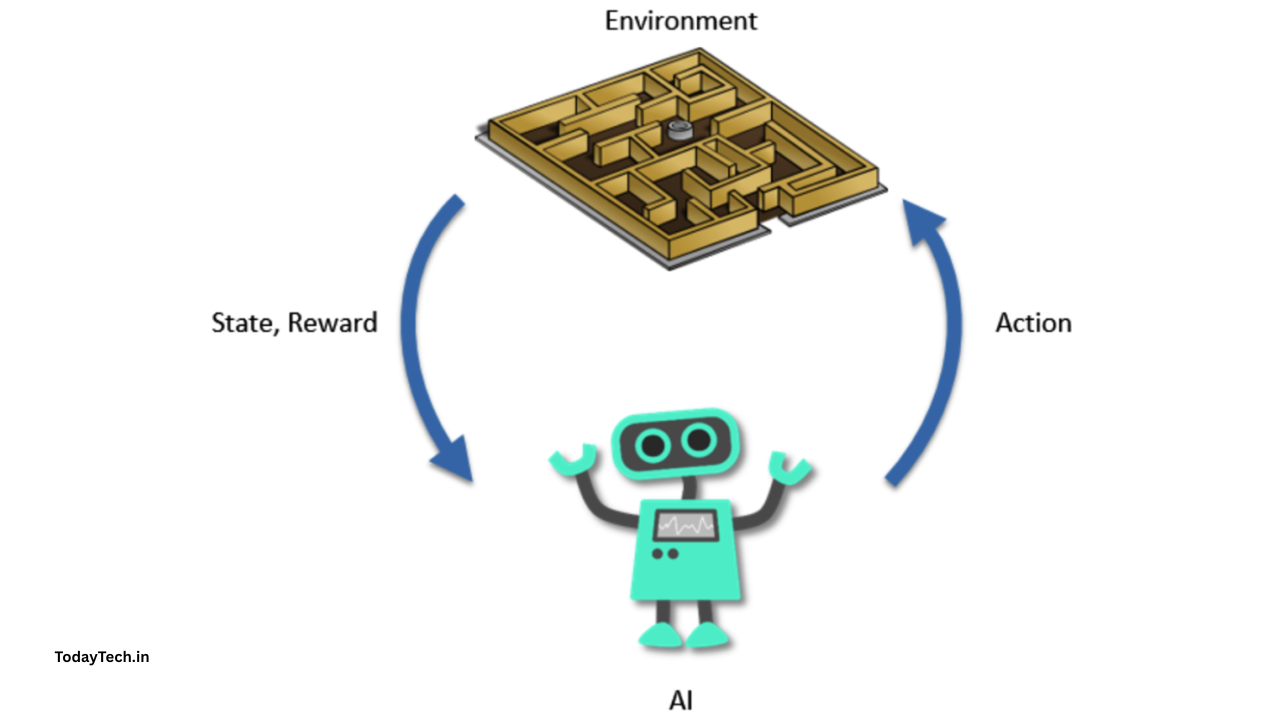

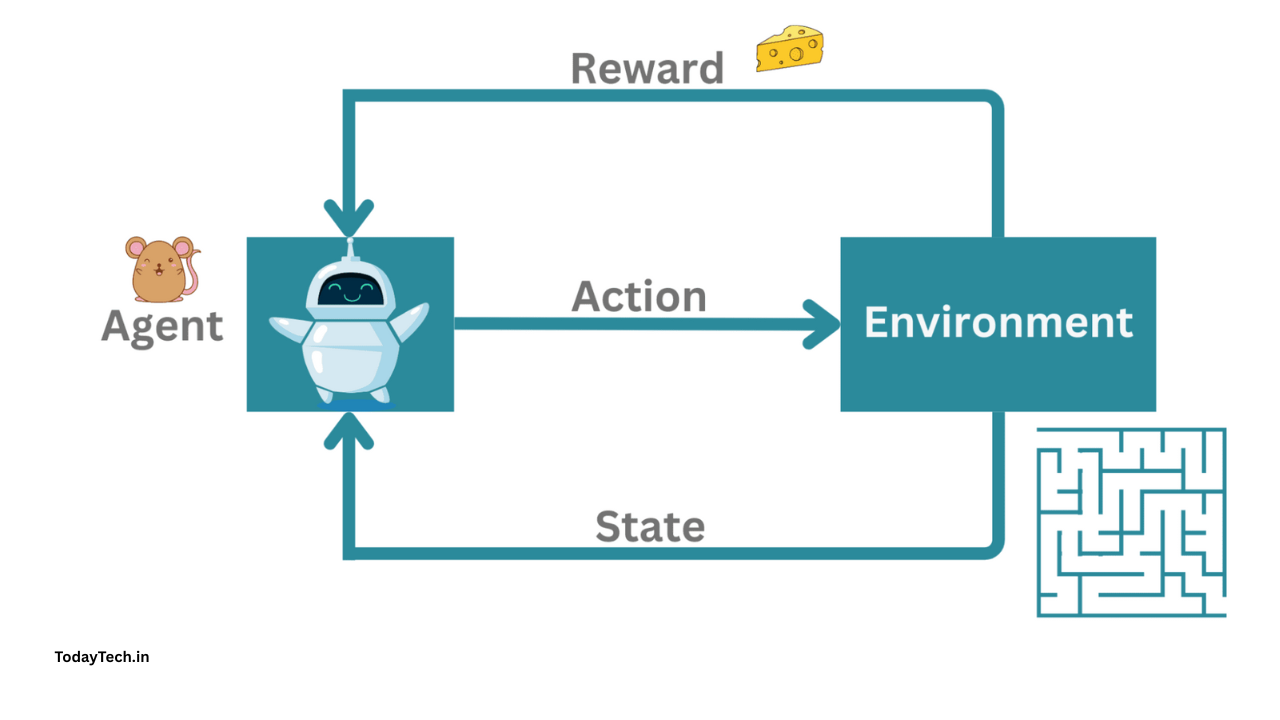

* The agent is the ML algorithm (or the autonomous system).

* The environment is the adaptive problem space, with attributes such as variables, boundary values, rules, and valid actions.

* The action is a step that the RL agent takes to navigate the environment.

* The state is the environment at a given point in time.

* The reward is the positive, negative, or zero value (in other words, the reward or punishment) for taking an action.

* The cumulative reward is the sum of all rewards, or the end value.
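
The concepts listed above can be tied together in a short sketch of one interaction loop. Everything here is hypothetical and chosen for illustration: the `CorridorEnvironment` class, its reward values (10 at the goal, -1 per other step), and the `random_agent` placeholder, which does not learn anything yet.

```python
import random


class CorridorEnvironment:
    """Hypothetical environment: a corridor of cells 0..4 with the goal at cell 4."""

    def __init__(self, length=5):
        self.length = length
        self.state = 0  # the state is the agent's current cell

    def reset(self):
        self.state = 0
        return self.state

    def step(self, action):
        # Valid actions are -1 (left) and +1 (right); the walls clamp the move.
        self.state = max(0, min(self.length - 1, self.state + action))
        done = self.state == self.length - 1
        reward = 10 if done else -1  # reward at the goal, small penalty otherwise
        return self.state, reward, done


def random_agent(state, rng):
    """Placeholder agent: ignores the state and picks a random valid action."""
    return rng.choice([-1, 1])


def run_episode(env, agent, rng, max_steps=100):
    state = env.reset()
    cumulative_reward = 0
    for _ in range(max_steps):
        action = agent(state, rng)              # the agent chooses an action
        state, reward, done = env.step(action)  # the environment returns state and reward
        cumulative_reward += reward             # the cumulative reward is the running sum
        if done:
            break
    return cumulative_reward


rng = random.Random(0)
total = run_episode(CorridorEnvironment(), random_agent, rng)
print("cumulative reward:", total)
```

A learning agent would replace `random_agent` with a function that uses the observed states and rewards to improve its choices over many episodes.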

Algorithm basics

Reinforcement learning is based on the Markov decision process, a mathematical model of decision-making that uses discrete time steps. At every step, the agent takes a new action that results in a new environment state. Similarly, the current state is the result of the sequence of previous actions.

Through trial and error in moving through the environment, the agent builds a set of if-then rules, or policies. The policies help it decide which action to take next for the greatest cumulative reward. The agent must also choose between exploring the environment further to learn new state-action rewards and selecting actions already known to yield high rewards in a given state. This is called the exploration-exploitation trade-off.
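
A simple and widely used way to balance exploring against exploiting is the epsilon-greedy rule: with a small probability the agent tries a random action, otherwise it picks the action with the highest estimated value. The sketch below assumes a hypothetical list of value estimates and an exploration rate of 0.1; both are illustrative choices, not values from the text.

```python
import random


def epsilon_greedy(action_values, epsilon, rng):
    """Return an action index given a list of estimated action values."""
    if rng.random() < epsilon:
        # Explore: pick any action uniformly at random.
        return rng.randrange(len(action_values))
    # Exploit: pick the action with the highest current estimate.
    return max(range(len(action_values)), key=lambda a: action_values[a])


rng = random.Random(42)
estimates = [0.2, 0.8, 0.5]  # hypothetical reward estimates for three actions
choices = [epsilon_greedy(estimates, 0.1, rng) for _ in range(1000)]
print("share of greedy choices:", choices.count(1) / len(choices))
```

Raising `epsilon` makes the agent explore more often, at the cost of choosing the best-known action less frequently.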

What are the types of reinforcement learning algorithms?

Various algorithms are used in reinforcement learning (RL), including Q-learning, policy gradient methods, Monte Carlo methods, and temporal difference learning. Deep RL is the application of deep neural networks to reinforcement learning. One example of a deep RL algorithm is Trust Region Policy Optimization (TRPO).

All of these algorithms can be grouped into two broad categories.

Model-based RL

Model-based RL is typically used when the environment is well defined and stable, and when testing in real-world conditions is difficult.

The agent first builds an internal representation (model) of the environment. It uses the following process to build this model:

- It takes actions within the environment and records the new state and the reward.

- It associates the action-state transition with the reward.

Once the model is complete, the agent simulates action sequences based on the probability of obtaining the maximum cumulative reward. It then assigns values to the action sequences themselves. In this way, the agent develops different strategies within the environment to achieve the desired goal.
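
The two phases above (build a model from recorded transitions, then simulate action sequences against it) can be sketched as follows. This is a deliberately tiny, deterministic illustration: the four-cell corridor, its rewards, and the names `true_environment`, `simulate`, and `plan` are all assumptions, and trying every state-action pair exhaustively is only feasible in an environment this small.

```python
from itertools import product

ACTIONS = (-1, 1)  # hypothetical actions: move left or move right


def true_environment(state, action):
    """Hypothetical 4-cell corridor: reaching cell 3 pays 10, other moves cost 1."""
    next_state = max(0, min(3, state + action))
    return next_state, (10 if next_state == 3 else -1)


# Phase 1: act in the environment and record the new state and reward.
model = {}
for state in range(4):
    for action in ACTIONS:
        model[(state, action)] = true_environment(state, action)


# Phase 2: simulate action sequences inside the learned model and score them.
def simulate(model, start, sequence):
    state, total = start, 0
    for action in sequence:
        state, reward = model[(state, action)]
        total += reward
        if state == 3:  # stop once the goal is reached
            break
    return total


def plan(model, start, horizon=3):
    """Return the action sequence with the highest simulated cumulative reward."""
    return max(product(ACTIONS, repeat=horizon),
               key=lambda seq: simulate(model, start, seq))


best = plan(model, start=0)
print(best, simulate(model, 0, best))
```

Note that planning never calls `true_environment`: once the model is recorded, all evaluation happens in simulation.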

Example

Consider a robot that is learning to navigate a new building to reach a specific room. Initially, the robot explores freely and builds an internal model (or map) of the building. It might, for example, learn that it reaches an elevator after moving about 10 meters from the main entrance. Once it has built the map, it can work out a set of shortest-path sequences between the different locations it frequently visits in the building.

Model-free RL

Model-free RL is best used when the environment is large, complex, and not easily described. It is also a good fit when the environment is unknown or changing, and when testing in the environment does not come with significant downsides.

The agent doesn't build an internal model of the environment and its dynamics. Instead, it uses a trial-and-error approach within the environment. It scores and records state-action pairs, and sequences of state-action pairs, to develop a policy.
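
Tabular Q-learning is one well-known model-free algorithm that works this way: it keeps a score for every state-action pair and updates it from experience, without ever storing how the environment behaves. The sketch below is a minimal illustration on a hypothetical five-cell corridor; the learning rate `alpha`, discount factor `gamma`, exploration rate `epsilon`, and reward values are all assumptions picked for the example.

```python
import random


def q_learning(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    n_states, actions = 5, (-1, 1)  # cells 0..4, move left or right
    # One score per state-action pair, all starting at zero.
    q = {(s, a): 0.0 for s in range(n_states) for a in actions}
    for _ in range(episodes):
        state = 0
        while state != n_states - 1:  # cell 4 is the goal
            # Trial and error: mostly greedy, occasionally random.
            if rng.random() < epsilon:
                action = rng.choice(actions)
            else:
                action = max(actions, key=lambda a: q[(state, a)])
            next_state = max(0, min(n_states - 1, state + action))
            reward = 10 if next_state == n_states - 1 else -1
            # Nudge the score toward reward plus the best score of the next state.
            best_next = max(q[(next_state, a)] for a in actions)
            q[(state, action)] += alpha * (reward + gamma * best_next - q[(state, action)])
            state = next_state
    return q


q = q_learning()
# The policy is read off the table: the best-scoring action in each state.
policy = {s: max((-1, 1), key=lambda a: q[(s, a)]) for s in range(4)}
print(policy)
```

The agent only ever sees the states and rewards it experiences; the corridor rules are applied inside the loop to stand in for the environment and are not stored as a model.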

Example

Consider a self-driving car that must navigate city traffic. Roads, traffic patterns, pedestrian behavior, and countless other factors make the environment highly dynamic and complex. AI teams train the vehicle in a simulated environment in the initial stages. The vehicle takes actions based on its current state and receives rewards or penalties.

Over time, by driving millions of miles across different virtual scenarios, the vehicle learns which actions work best in each state without explicitly modeling the dynamics of its entire environment. When the vehicle is introduced to the real world, it applies the learned policy but continues to refine it with new data.

What's the difference between reinforcement, supervised, and unsupervised machine learning?

Although supervised learning, unsupervised learning, and reinforcement learning (RL) are all ML approaches in the field of AI, there are distinct differences among the three.

Reinforcement learning vs. supervised learning

In supervised learning, you define both the input and the expected output. For instance, you could provide a set of images labeled as dogs or cats, and the algorithm is then expected to identify a new animal image as either a dog or a cat.

Supervised learning algorithms learn patterns and relationships between the input and output pairs. They then predict outcomes based on new input data. This requires a supervisor, typically a human, to label each record in the training data set with an output.

In contrast, RL has a well-defined end goal in the form of a desired result, but no supervisor to label the data in advance. During training, instead of mapping inputs to known outputs, it maps inputs to possible outcomes. By rewarding desired behaviors, you give more weight to the best outcomes.

Reinforcement learning vs. unsupervised learning

Unsupervised learning algorithms receive inputs with no specified outputs during the training process. They find hidden patterns and relationships within the data using statistical means.

For instance, you could provide a set of documents, and the algorithm might group them into categories it identifies based on the words in the text. You don't get any specific outcome; the results fall within a range.

Conversely, RL has a predetermined end goal. While it takes an exploratory approach, its explorations are continually evaluated and improved to increase the probability of reaching that goal. It can teach itself to reach very specific outcomes.

What are the challenges with reinforcement learning?

Although reinforcement learning (RL) applications could potentially change the world, it may not be easy to deploy these algorithms.

Practicality

Experimenting with real-world reward and punishment systems may not be practical. For instance, testing drones in real-world conditions without first using a simulator would result in a large number of broken drones. Real-world environments also change often, significantly, and with little warning. This can make it harder for an algorithm to be effective in practice.

Interpretability

Like any field of science, data science relies on conclusive research and findings to establish rules and standards. Data scientists want to know exactly how a specific conclusion was reached so that they can verify and reproduce it.

With complex RL algorithms, the reasons why a particular sequence of steps was chosen can be difficult to ascertain. Which actions in the sequence led to the best end result? This can be difficult to determine, which causes problems during implementation.